An IT team’s worst nightmare happened earlier this month: Google Cloud accidentally deleted the cloud account of UniSuper, an Australian pension fund worth $135 billion. The deletion also wiped all the backups stored on the service, leaving UniSuper in an extremely difficult situation. Fortunately, the fund had backups with a different provider, which allowed it to restore services after about two weeks. If its DevOps team hadn’t planned for redundancy, that story would have ended differently.

What Is DevOps?

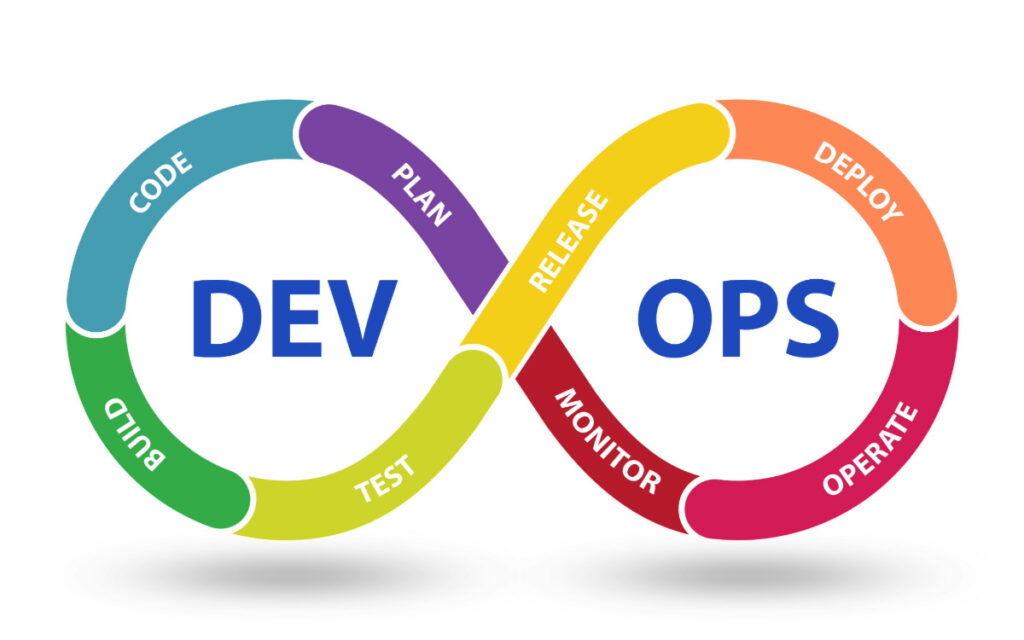

DevOps is a set of practices and cultural philosophies that combines software development (Dev) and IT operations (Ops) to shorten the development life cycle and deliver high-quality software. This approach emphasizes collaboration between development and operations teams, automation of processes, and continuous improvement. The aim is to increase the speed and efficiency of delivering applications and services.

The Value of Data Backups

Data backups are essential as organizations have mostly shifted to digital recordkeeping. Data can include everything from customer records to critical information required for day-to-day operations. Yet, while most companies have a disaster recovery plan, only 24% have a routinely tested, updated, and well-documented plan. That means in the face of a data failure incident, many businesses simply aren’t ready.

How DevOps Redundancy Works

DevOps redundancy ensures critical systems operate smoothly, even if one part fails. Here’s a quick overview of how redundancy works within a DevOps setting:

Multiple Copies

This type of redundancy involves setting up multiple copies of environments, like servers or databases. If one server goes down, another can immediately take over, keeping the service running without interruptions.

Data Replication

Essential data is duplicated across different storage systems, so a copy is always available for recovery. Depending on the system setup, replication can happen simultaneously at multiple locations (synchronous) or with a slight delay (asynchronous).
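As a rough illustration of replication, the sketch below copies one file to several destinations and confirms each copy with a checksum. The function names `sha256_of` and `replicate` are hypothetical, and local directories stand in for what would normally be separate storage systems.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def replicate(source: Path, destinations: list[Path]) -> dict[Path, bool]:
    """Copy source into each destination directory and verify each copy.

    Returns a mapping of copied file path -> whether its checksum matches.
    Assumption: each destination directory represents a separate storage system.
    """
    expected = sha256_of(source)
    results = {}
    for directory in destinations:
        directory.mkdir(parents=True, exist_ok=True)
        copy_path = directory / source.name
        shutil.copy2(source, copy_path)
        results[copy_path] = sha256_of(copy_path) == expected
    return results
```

Verifying the checksum after each copy is a design choice here: a replica that was never checked may turn out to be unusable when it is needed.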

Load Balancing

This technique distributes incoming traffic across several servers to prevent any single server from overloading. If a server fails, the load balancer redirects traffic to the remaining active servers, maintaining service availability.
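A minimal sketch of this idea, assuming a simple round-robin strategy, is shown below. The class `RoundRobinBalancer` and the server names are hypothetical; real load balancers also run their own health checks, which are left out here.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out servers in rotation, skipping any marked as down."""

    def __init__(self, servers: list[str]) -> None:
        # Assumption: server names are unique.
        self.servers = list(servers)
        self.healthy = set(servers)
        self._rotation = cycle(self.servers)

    def mark_down(self, server: str) -> None:
        """Stop routing traffic to a failed server."""
        self.healthy.discard(server)

    def mark_up(self, server: str) -> None:
        """Resume routing traffic to a recovered server."""
        if server in self.servers:
            self.healthy.add(server)

    def next_server(self) -> str:
        """Return the next healthy server in rotation."""
        if not self.healthy:
            raise RuntimeError("no healthy servers available")
        # One full pass over the rotation is enough to reach a healthy server.
        for _ in range(len(self.servers)):
            candidate = next(self._rotation)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy servers available")
```

For example, after `mark_down("app-2")` on a three-server pool, calls to `next_server()` alternate between the two remaining servers.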

Automatic Switching

The system is designed to automatically transfer operations to a backup system without human intervention if it detects a problem. This immediate response helps to minimize any potential disruptions.
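One way to picture automatic switching is a small controller that counts failed health checks and fails over once a threshold is reached. This is only a sketch: the class `FailoverController`, the threshold of three, and the manual `fail_back` step are all assumptions made for illustration.

```python
class FailoverController:
    """Switches from the primary to the backup after repeated failed health checks."""

    def __init__(self, primary: str, backup: str, failure_threshold: int = 3) -> None:
        if failure_threshold < 1:
            raise ValueError("failure_threshold must be at least 1")
        self.primary = primary
        self.backup = backup
        self.failure_threshold = failure_threshold
        self.active = primary
        self._consecutive_failures = 0

    def record_health_check(self, primary_is_healthy: bool) -> str:
        """Record one health check of the primary and return the active system."""
        if primary_is_healthy:
            self._consecutive_failures = 0
        else:
            self._consecutive_failures += 1
            if self._consecutive_failures >= self.failure_threshold:
                # Fail over without waiting for a person to intervene.
                self.active = self.backup
        return self.active

    def fail_back(self) -> None:
        """Return to the primary; kept manual so a flapping primary is not reused too early."""
        self._consecutive_failures = 0
        self.active = self.primary
```

Requiring several consecutive failures avoids switching on a single dropped check, at the cost of a slightly slower reaction.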

Regular Testing

Redundancy systems should be tested and maintained regularly so they work when needed. This proactive approach helps catch and fix issues before they can cause a disruption.
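A restore test can be as simple as comparing restored files against checksums recorded from the live data. The sketch below assumes that approach; `build_manifest` and `verify_restore` are hypothetical helper names, and files are read fully into memory, which only suits small test sets.

```python
import hashlib
from pathlib import Path


def build_manifest(directory: Path) -> dict[str, str]:
    """Map each file's relative path to its SHA-256 digest."""
    manifest = {}
    for path in sorted(directory.rglob("*")):
        if path.is_file():
            relative = path.relative_to(directory).as_posix()
            manifest[relative] = hashlib.sha256(path.read_bytes()).hexdigest()
    return manifest


def verify_restore(manifest: dict[str, str], restored: Path) -> list[str]:
    """Return a list of problems; an empty list means the restore matches the manifest."""
    problems = []
    for relative, expected in manifest.items():
        candidate = restored / relative
        if not candidate.is_file():
            problems.append(f"missing: {relative}")
        elif hashlib.sha256(candidate.read_bytes()).hexdigest() != expected:
            problems.append(f"corrupted: {relative}")
    return problems
```

Running a check like this on a schedule, against an actual restore rather than the backup archive itself, is what turns a backup into a tested recovery path.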

The Danger of Not Having Redundancy With DevOps

As highlighted by the incident with UniSuper, relying on only one data storage service can have unforeseen consequences. Even though they had multiple backups within Google’s unified platform, an accidental deletion of their account wiped everything they had stored. Any organization relying solely on one service for its data and backups is vulnerable in the same way.

Service Downtime Can Be Costly

Even though the Australian pension fund was able to restore its systems using backups, it experienced downtime from May 2nd to May 15th. The cost of that downtime can’t be ignored: the fund had to process a two-week backlog of requests and payments, which left customers frustrated. While 83% of IT decision-makers say up to 12 hours of downtime is acceptable, UniSuper’s recovery took far longer. Still, the damage would have been much greater without the redundancy.

If the lost data had been unrecoverable, UniSuper would have had to rely on other records, such as member statements, email transactions, and physical documents. Even then, there would likely have been no way to restore it fully for all 647,000 members. As a result, the fund would likely have faced heavy fines and legal costs for mismanaging assets. Reputation damage can also have long-lasting consequences, which is why minimizing downtime should always be a priority.

Building DevOps Redundancy With Effective Backup Practices

Building redundancy in DevOps with effective backup practices is crucial for ensuring your operations can withstand failures and data loss incidents. Here are some ways you can integrate backup practices to improve system redundancy:

- Diversify Backup Solutions: Securely store backups using more than one solution. If one backup or service fails, there will still be a copy elsewhere.

- Automate Backup Processes: Use scripts and automation tools to schedule and manage backups efficiently. That can help ensure data is consistently protected.

- Use Incremental Backups: Employ incremental backups to save only changes since the last backup session, reducing storage needs and speeding up the backup process.

- Regular Testing and Validation: Conduct routine tests to ensure backups are complete and data can be fully restored. Testing helps identify potential issues early.

- Secure Backup Data: Ensure all backups are encrypted and stored securely to prevent unauthorized access. Protection should cover data both at rest and in transit.

- Align with Disaster Recovery: Align backup practices with your organization’s disaster planning, outlining specific roles and actions for handling backups in different scenarios.
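To make the incremental backup idea from the list above more concrete, here is a minimal sketch that copies only files that are new or changed since the previous run. The function `incremental_backup` and its state dictionary are hypothetical; the sketch does not track deleted files, and dedicated backup tools handle this far more thoroughly.

```python
import hashlib
import shutil
from pathlib import Path


def incremental_backup(
    source: Path, target: Path, previous: dict[str, str]
) -> tuple[dict[str, str], list[str]]:
    """Copy only files that are new or changed since the previous run.

    previous maps relative path -> SHA-256 digest from the last backup session.
    Returns the updated state and the relative paths that were copied.
    """
    current = {}
    copied = []
    for path in sorted(source.rglob("*")):
        if not path.is_file():
            continue
        relative = path.relative_to(source).as_posix()
        digest = hashlib.sha256(path.read_bytes()).hexdigest()
        current[relative] = digest
        if previous.get(relative) != digest:
            destination = target / relative
            destination.parent.mkdir(parents=True, exist_ok=True)
            shutil.copy2(path, destination)
            copied.append(relative)
    return current, copied
```

The first run, with an empty state, behaves like a full backup. Later runs pass in the state returned by the previous one, so unchanged files are skipped.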

Focusing on these key practices can effectively build redundancy into your DevOps operations. Doing so will help protect your systems and help you recover from service disruptions faster.

How To Choose the Right Backup Tools

Selecting the appropriate backup tools is crucial for ensuring your data is protected and your operations can recover swiftly from disruptions. Here are a few steps to guide you to the right choice:

1. Assess Your Business Needs

Evaluate your business’s requirements in terms of data size and system complexity. Also, consider how quickly you need to recover data (your recovery time objective, or RTO) and how much recent data you can afford to lose (your recovery point objective, or RPO).
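As a quick worked example, the sketch below checks a backup schedule against both objectives. It assumes the worst-case data loss is roughly equal to the interval between backups, which is a simplification. The function `meets_objectives` and the sample figures are hypothetical.

```python
from datetime import timedelta


def meets_objectives(
    backup_interval: timedelta,
    estimated_restore_time: timedelta,
    rpo: timedelta,
    rto: timedelta,
) -> dict[str, bool]:
    """Compare a backup schedule with recovery objectives.

    Simplifying assumption: a failure just before the next backup loses
    about one full backup interval of data.
    """
    return {
        "rpo_met": backup_interval <= rpo,
        "rto_met": estimated_restore_time <= rto,
    }


# Nightly backups with a six-hour restore, checked against a 4-hour RPO and 12-hour RTO.
result = meets_objectives(
    backup_interval=timedelta(hours=24),
    estimated_restore_time=timedelta(hours=6),
    rpo=timedelta(hours=4),
    rto=timedelta(hours=12),
)
```

In this example the restore time fits within the RTO, but nightly backups cannot satisfy a 4-hour RPO, so backups would need to run more often.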

2. Storing Your Backups

There are a few types of backup solutions to consider:

- On-Site Backups: Quick access, stored on-premises, but risk of physical damage.

- Off-Site Backups: Stored physically away from where the data is being used.

- Cloud-Based Backups: Off-site, scalable, and accessible from anywhere.

- Hybrid Backups: Combines local and cloud advantages for broader protection.

Make sure your backups aren’t all stored in the same place. That greatly reduces the chance of them all failing at once.
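A simple inventory check can flag when every copy depends on the same provider or location. The sketch below is illustrative only; the `BackupCopy` record, the function `single_points_of_failure`, and the sample provider names are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupCopy:
    name: str
    provider: str  # for example, a cloud vendor or "on-premises"
    location: str  # for example, a region or a physical site


def single_points_of_failure(copies: list[BackupCopy]) -> list[str]:
    """Return warnings when all backup copies share a provider or a location."""
    warnings = []
    if len({copy.provider for copy in copies}) < 2:
        warnings.append("all copies depend on one provider")
    if len({copy.location for copy in copies}) < 2:
        warnings.append("all copies are stored in one location")
    return warnings
```

Two copies in different regions of the same provider would still trigger the provider warning, which is the situation the UniSuper incident exposed.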

3. Consider the Features

When choosing a backup tool, consider what features are crucial for your organization’s needs. Some of those can include:

- Automation: Streamlines backup processes for consistent data protection.

- Scalability: Adjusts to your growing data needs without performance loss.

- Security: Uses strong encryption and meets compliance regulations.

- Ease of Use: Has an easy-to-use interface and set of features for your IT team.

When in doubt, an IT consultation is a great way to get extra insight and support when determining a backup strategy.

4. Vendor Reputation and Support

Opt for vendors known for reliability and excellent customer service. Trial the solution to ensure it integrates smoothly with your systems.

5. Cost and Future Proofing

Weigh the costs against the features offered. Consider future business changes and ensure the backup solution can adapt to growing needs.

ITonDemand: Ensuring Data Reliability Through Redundancy

ITonDemand uses redundancy through a variety of backup and monitoring tools, partnering with solutions like Veeam to ensure data is well managed. The pension fund incident shows that relying too much on one solution can backfire. We also understand that data is one of any company’s most valuable assets. That’s why all our service packages include backups and disaster recovery. Don’t leave the safety and reliability of your data up to chance.

Are you concerned about your data backup strategy? Reach out via our contact form or give us a call to learn more about our services: 1-800-297-8293